How To Filter By Word In Stata

Stata is a good tool for cleaning and manipulating data, regardless of the software you intend to use for analysis. This workshop is suitable for both first-time data-cleaners and for those familiar with data cleaning.

Table of Contents

- Some useful tips before you get started

- Creating a number of smaller subsets based on research criteria

- Dropping observations

- Dropping variables

- Transforming variables

- Dealing with outliers

- Creating new variables

- Moving variables

- Labelling variables

- Renaming variables

- A few last words

Cleaning data is a rather broad term that applies to the preliminary manipulations on a dataset prior to analysis. It will very often be the first assignment of a research assistant and is the tedious part of any research project that makes us wish we HAD a research assistant. Stata is a good tool for cleaning and manipulating data, regardless of the software you intend to use for analysis. Your first pass at a dataset may involve any or all of the following:

- Creating a number of smaller subsets based on research criteria

- Dropping observations

- Dropping variables

- Transforming variables

- Dealing with outliers

- Creating new variables

- Moving variables

- Labeling variables

- Renaming variables

Whether this is your first time cleaning data or you are a seasoned "data monkey", you might find some useful tips by reading more.

Some useful tips before you get started[1]

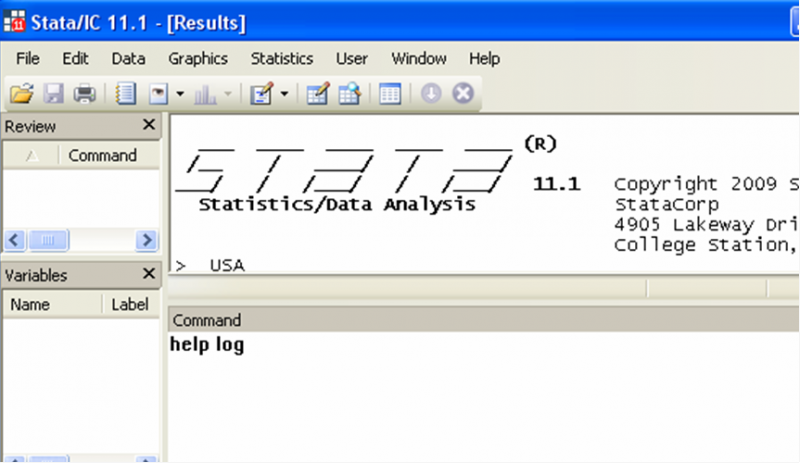

Use the Stata help file. Stata has a built-in feature that allows you to access the user manual as well as help files on any given command. Simply type "help" in the command window, followed by the name of the command you need help with, and press the Enter key:
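As a minimal sketch, the help lookup might look like this in the command window; "drop" and "recode" are picked here only as examples of commands covered later in this guide.

```stata
* Open the help file for a specific command
help drop
help recode
```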

Write a do-file. Never clean a dataset by blindly entering commands (or worse, clicking buttons). You want to write the commands in a do-file, and then run it. This way, if you make a mistake, you will not have ruined your entire dataset and you will not need to start again from scratch. This is general advice that applies to any work you do in Stata. Working from do-files lets other people see what you did if you ever need advice, it makes your work reproducible, and it allows you to correct small mistakes somewhat painlessly.

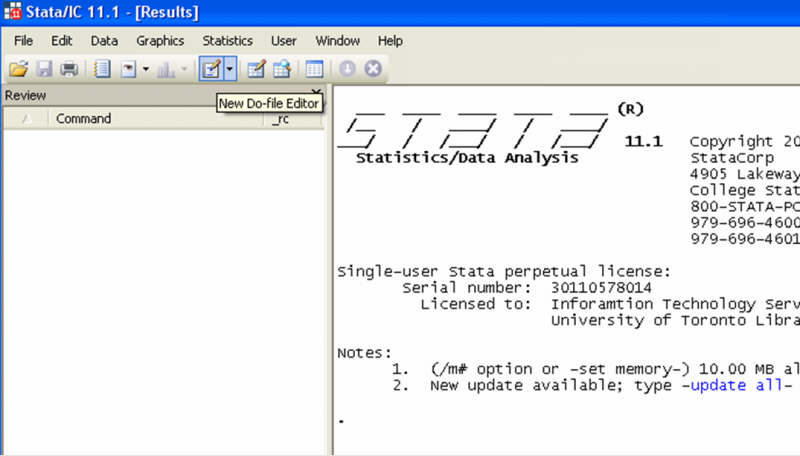

To start a do-file, click on the icon that looks like a notepad on the top-left corner of your Stata viewer[2].

In the preliminary stages of your work, you may feel that a do-file is more of a hindrance than it is useful. For example, if you are not so familiar with a command, you may prefer to try it first. One simple way to do that and still have discipline about writing do-files is to write your do-file in stages, writing only a few commands before executing them, correcting mistakes as you go. In order to execute a number of commands rather than the whole do-file, simply highlight the ones you want to execute, and click on the "Execute Selection (do)" icon on the top of your do-file editor, at the far right.

As you become more skilled with programming in Stata, you won't need to try out commands anymore, and you'll discover the joy of writing a do-file and having it run without a glitch. To run a whole do-file, do not highlight any part of it and click on the "Execute Selection (do)" icon.

You may wonder about the commands "clear", "set more off" and "set mem 15000" in the screenshot example. These three commands are administrative commands that are quite useful to have at the start of a do-file. The first, "clear", is used to clear any previous dataset you may have been working on. The command "set more off" tells Stata not to pause or display the --more-- message. Finally, the command "set mem 15000" increases the memory available to Stata from your computer; here we will need it as the size of the data set we downloaded from <odesi>[3] is larger than the 10mb allocated to data by default.
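For reference, a do-file header along these lines could be sketched as follows. "set mem" is the abbreviation of "set memory"; recent versions of Stata manage memory automatically, so that line may be unnecessary (and is likely ignored) there.

```stata
* Administrative commands at the top of a cleaning do-file
clear              // remove any dataset currently in memory
set more off       // do not pause output with --more--
set mem 15000      // older Stata versions only: raise the memory allocated to data
```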

One last comment about do-files: if you double-click a saved do-file, it will not open for editing, but rather Stata will run that do-file, which can be a bit annoying… To reopen a do-file from a folder without executing the commands in it, right-click on it and select "edit" rather than "open".

Always keep a log. Again, this is a general rule of thumb in Stata. Keeping a log means you can go back and look at what you did without having to do it again. Starting a log is just a matter of adding a command at the top of your do-file that tells Stata to log, as well as where you want the log to be saved:

log using "whateverpathyouwant:\pickanameforyourlog.smcl"[4], replace[5]

Note how logs are saved under the smcl extension.

Do not forget to close your log before starting a new one. The last command in your do-file[6] will usually be "log close".
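Putting the two log commands together, a do-file might be bracketed as sketched below. The folder C:\mystata and the file name cleaning.smcl are placeholders, not paths from this guide. The line "capture log close" is an optional extra: it closes any log left open by an earlier interrupted run and does nothing otherwise.

```stata
capture log close                              // close a leftover log, if any
log using "C:\mystata\cleaning.smcl", replace  // placeholder path and file name

* ... cleaning commands go here ...

log close
```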

Save as you go. Computers crash, power goes out, stuff happens. Save your do-files every few minutes as you write them. Saving a do-file is done the same way as saving any text editor document: either click on the diskette icon, or press "CTRL+S":

You should also save your dataset as you modify it, but make sure to keep one version of the original dataset, in case you need to start over. The command to save a dataset in Stata is "save", followed by the path where you want the dataset to be saved, and the [optional] option "replace".

Note how the extension for Stata data is ".dta", and also note how the new dataset has a different name from the original[7].
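A minimal sketch of the save step, assuming a placeholder folder C:\mystata and hypothetical file names. The point is only that the cleaned file gets a different name from the original.

```stata
* Load the original file, work on it, then save under a NEW name
use "C:\mystata\cchs_original.dta", clear
* ... cleaning commands ...
save "C:\mystata\cchs_clean.dta", replace
```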

Get familiar with your dataset. Datasets come with codebooks. You should know what each variable is, how it's coded, and how missing values are identified. A good practice is to actually look at the data, so that you understand its structure. To do so, you can click on "Data" in the top-left corner of your viewer and select Data editor, then Data editor (browse). A new window will open and you can see your data.

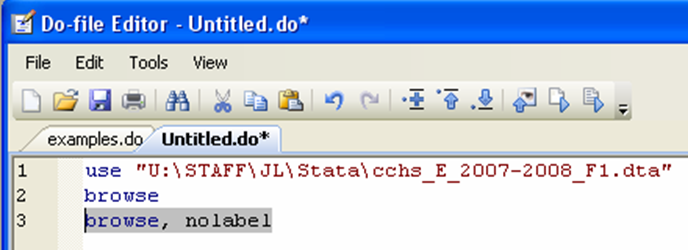

You can also use the command "browse", either by typing it directly in the command window, or from a do-file:
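For instance, the following commands could be typed in the command window or placed in a do-file. "describe" and "codebook" are not discussed in this guide; they are suggested here only as a quick complement to browsing.

```stata
browse                 // open the data editor in browse (read-only) mode
describe               // list variables with their storage types and labels
codebook GEOPRV        // summarize coding and missing values for one variable
```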

One of the distinguishing features of <odesi> is that when you download a dataset, it comes with labels. Variable labels are descriptions of variables, and value labels are used to describe the way variables are coded. Basically, the value label sits on top of the code, so that when you browse, you see what the code means rather than what it is. To make this clearer, let's look at the data with no labels. Look, for example, at the GEOPRV variable.
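One way to see the underlying codes, sketched here on the assumption that the CCHS subset is in memory, is the "nolabel" option:

```stata
browse GEOPRV, nolabel       // show numeric codes instead of value labels
tabulate GEOPRV              // frequencies shown with value labels
tabulate GEOPRV, nolabel     // same table shown with the numeric codes
```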

Creating a number of smaller subsets based on research criteria

There are many reasons why you may want a smaller subset of your data, but the main one is that the bigger the dataset, the harder it is for Stata to manage, which slows down your system. Your goal is to make your dataset as small as possible, while keeping all the relevant information. Your research agenda determines what your final dataset will contain.

Let's say you have data on the health habits of Canadians aged 12 and up, but your research question is specific to women of reproductive age living in Ontario[8]. You clearly don't need to keep the men in your dataset, and you won't need to keep the residents of provinces other than Ontario. Furthermore, you can probably drop women under 15 and over 55 years old. Now, let's look at how you would do that.

Dropping observations

To drop observations, you need to combine one of two Stata commands (keep or drop) with the "if" qualifier.

Make sure you have saved your original dataset before you get started.

The "keep" command should be used with caution (or avoided altogether) because it will drop all but what you specifically keep. This can be a problem if you are not 100% sure of what you want to keep.

The "drop" command will drop from your dataset what you specifically ask Stata to drop.

The "if" qualifier restricts the scope of the command to those observations for which the value of an expression is true. The syntax for using this qualifier is quite simple:

command if exp

Where command in this case would be drop, and exp is the expression that needs to be true for the "drop" command to apply[9].

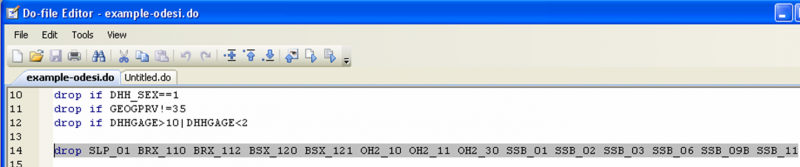

Using the example of women of reproductive age in Ontario, the first highlighted line drops men, the second line drops any observation not in Ontario, while the last line drops observations in age groups older or younger than our subset of interest.

You have to be careful with logical operators; notice the syntax in the third line. A common mistake is to ask Stata to "drop if DHHGAGE>10&DHHGAGE<2" (DHHGAGE is coded in age groups, so the cutoffs are group codes rather than ages). There are no individuals in the dataset who are older than 55 AND younger than 15. We want to drop if older than 55 OR younger than 15.
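The three drop commands could be sketched as follows. The variable name DHH_SEX and the codes used (1 for male, 35 for Ontario, age groups 2 to 10 for ages 15 to 54) are assumptions for illustration; check them against your codebook. Variable names in Stata are case-sensitive, so match the casing used in your own file.

```stata
drop if DHH_SEX == 1                  // assumed: 1 = male
drop if GEOPRV != 35                  // assumed: 35 = Ontario
drop if DHHGAGE > 10 | DHHGAGE < 2    // OR, not AND: outside the age groups of interest
```

Note that Stata treats missing values as larger than any number, so a condition such as DHHGAGE > 10 is also true for observations where DHHGAGE is missing.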

Here is a list of operators in expressions. You would mostly use logical and relational operators in conjunction with "if":
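As a brief sketch of the operators most often used with "if" (the codes below are illustrative only): the relational operators are ==, !=, >, <, >= and <=, and the logical operators are & (and), | (or) and ! (not).

```stata
count if DHHGAGE == 5                     // equal to
count if DHHGAGE != 5                     // not equal to
count if DHHGAGE >= 2 & DHHGAGE <= 10     // both conditions true
count if DHHGAGE < 2 | DHHGAGE > 10       // at least one condition true
count if !(DHHGAGE < 2)                   // negation
```

Using "count" instead of "drop" is a safe way to try out an expression, since it only reports how many observations satisfy it.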

Dropping variables

Another way in which you may need to make your dataset smaller is by dropping variables that are not useful to your research. It may be that the information contained in a given variable is duplicated (i.e. another variable provides the same info), or maybe all the observations for a variable are missing, or a variable just happens to be in your dataset but is irrelevant to your research. Dropping variables is very straightforward; just use the "drop" command.

Looking at the data from CCHS, the variable SLP_01 (Number of hours spent sleeping per night) is coded as ".a" (NOT APPLICABLE) for each observation in the dataset.

Clearly we will not learn anything from that variable, so we can drop it. The syntax for dropping variables is simple:

drop varlist

Where varlist is the list of variables you would like to drop. It's easy to drop a number of variables at a time this way. Here I am dropping all the variables that were coded as Not Applicable for more than 95% of observations[10]:
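A sketch of this step for the SLP_01 variable mentioned above; othervar1 and othervar2 are placeholder names showing where additional variables would be listed.

```stata
tabulate SLP_01, missing     // confirm that every observation is coded as missing
drop SLP_01
* Several variables can be dropped in one command:
* drop othervar1 othervar2
```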

Transforming variables

Sometimes variables are not coded the way you want them to be. In this section we will look at two transformations you may need to do on some variables before using them: recode and destring.

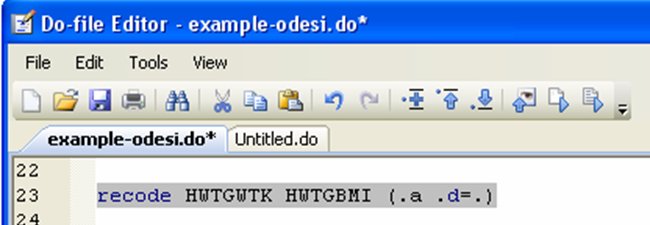

The "recode" command changes the values of numeric variables according to the rules specified. In the CCHS dataset, many variables have missing values coded as ".a" or ".d". This is convenient because it will not affect calculations you might do using the data (for example if you calculate an average). However, many datasets use 999 as a missing value code, and that might be problematic. We might want to recode these as "." in order to not have them affect any calculations we plan on doing with the data. The syntax for this command is:

recode varlist (old value(s)=new value)[11]

Let's recode the height and BMI variables from the CCHS data (for the sake of illustration, since it's actually not necessary in this case):
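A sketch of what such a recode might look like, assuming the height and BMI variables are named hwtghtm and hwtgbmi and that 999 is the code to be treated as missing. Neither assumption comes from the CCHS file itself, where missing values are already coded as ".a" or ".d".

```stata
* Turn a numeric missing-value code into Stata's system missing value
recode hwtghtm hwtgbmi (999 = .)

* Collapse every kind of missing value (., .a, .d, ...) into "."
recode hwtghtm hwtgbmi (missing = .)
```

Because recode overwrites the variables in place, it is worth running it only on a copy of the original dataset.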

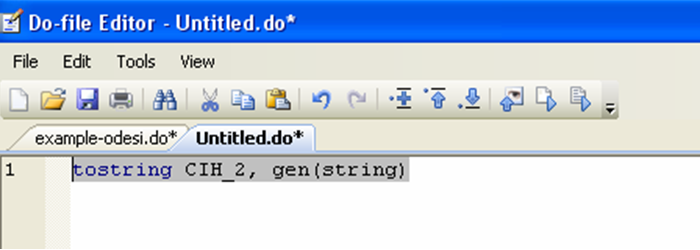

The "destring" command allows you to convert data saved in the string format (i.e. alphanumeric) into a numerical format. The CCHS dataset does not contain any string variable. In order to see what a string variable looks like, we can use the converse command, "tostring", to create a string variable. We will then convert that variable back to a numerical format.

A string variable shows up in red in the data editor:

Although it may look the same as the variable CIH_2, Stata cannot do any calculations on the string variable (since its format is telling Stata that it is made of letters or other symbols). Let's destring it:

Notice the use of the options "generate" and "replace". When we created the fake string variable, we used "generate" because we wanted a new separate variable. Now, when we destring, we are replacing the string variable by its numerical counterpart. How you choose to do this in your own dataset depends on how you plan to use the variables. Will you still have any use for the string variable? If so, generate a new one when you destring. Do you just want that variable to not be in string format? Then replace it with the new one.
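The round trip described above could be sketched as follows. The name cih2_str is a placeholder for the fake string variable, and cih2_num is a hypothetical name for the alternative shown in the comment.

```stata
tostring CIH_2, generate(cih2_str)     // new string copy; CIH_2 is left untouched
destring cih2_str, replace             // convert the copy back to numeric, in place

* To keep the string version as well, generate a new variable instead:
* destring cih2_str, generate(cih2_num)
```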

Here, we can see that our variable string is now completely identical to the variable CIH_2:

(We can drop that variable now)

Dealing with outliers

Outliers deserve their own section because there is often confusion as to what exactly constitutes an outlier. An outlier is NOT an observation with an unusual but possible value for a variable[12]; rare events do occur. The outliers you should be concerned about are the ones that come from coding error. How do you tell which is which? Common sense goes a long way here.

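First, look at your data using the data editor (browse). Outliers tend to jump out at you. If you have a small dataset, you can also tabulate each of your variables ("tab" handles one variable at a time, while "tab1" produces a separate one-way table for each variable in a list):

tab1 varlist[13]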

Tabulating a variable will give you a list of all the possible values that variable takes in the dataset. Outliers will be the extreme values. Look at the order of magnitude. Are these values believable?

If the dataset is very large, however, it may not be practical to stare at all the values a variable can take. In fact, Stata will not tabulate if there are too many different values.

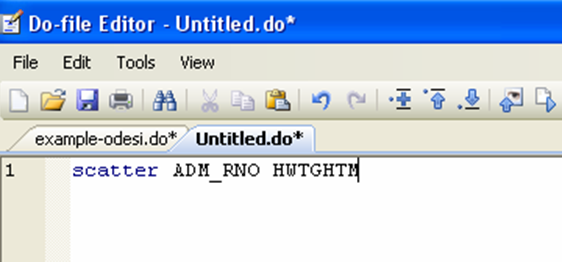

You can look at your data in a scatter plot:
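A minimal sketch of such a plot, using the two variables described just below:

```stata
* Height (y axis) against the respondent identifier (x axis)
scatter hwtghtm caseid
```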

In the CCHS dataset, caseid is the individual id, while hwtghtm is the height in meters. The graph tells us there are no outliers in this dataset:

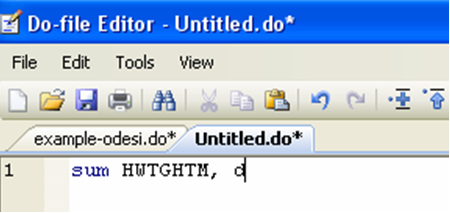

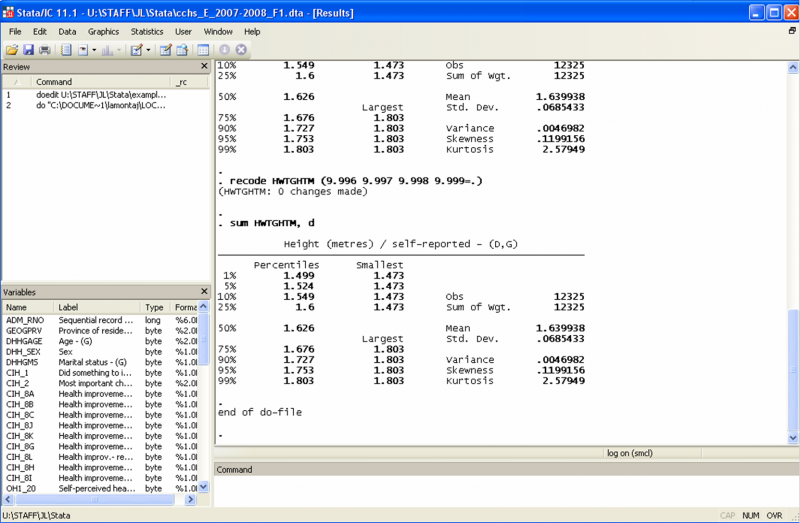

Another way to look for outliers is to summarize the observations for a variable, using the detail option:

The result window will show the main percentiles of the distribution (including the median – 50%), the first four moments, as well as the four smallest and four largest observations:
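For the height variable, the command might look like this:

```stata
* Percentiles, moments, and the smallest and largest values of height
summarize hwtghtm, detail
```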

Clearly, there are no outliers. Let's imagine for a moment that the 99th percentile of the height distribution includes an observation with 5.2m entered as the height. Is it plausible that there really was a 5.2m woman recorded in this dataset? Look at the order of magnitude by which this observation would differ from the second largest. It's almost 50 standard deviations bigger...

What should you do with such an observation? There are a number of solutions but none is perfect:

- Drop it from your dataset ("drop if hwtghtm>1.803")

- Use the "if" qualifier to exclude it when generating statistics that use the height variable ("command if hwtghtm<=1.803")

- Ignore it if the height variable is not actually that important in your research and the rest of the variables for this observation are coded just fine
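The first two options could be sketched as follows, using the same 1.803 cutoff as in the list above. The "!missing()" condition is an addition of this sketch: without it, observations with a missing height would also satisfy hwtghtm > 1.803 and be dropped.

```stata
* Inspect the suspicious observations before deciding anything
list caseid hwtghtm if hwtghtm > 1.803 & !missing(hwtghtm)

* Option 1: drop them
drop if hwtghtm > 1.803 & !missing(hwtghtm)

* Option 2 (instead of dropping): exclude them command by command
* summarize hwtghtm if hwtghtm <= 1.803
```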

Creating new variables

There are two main commands you need to know to generate new variables: "gen" is for the basics, while "egen" allows you to get pretty fancy. You can combine these with qualifiers such as "if" or "in" as well as prefixes such as "by" and "bysort"[14].

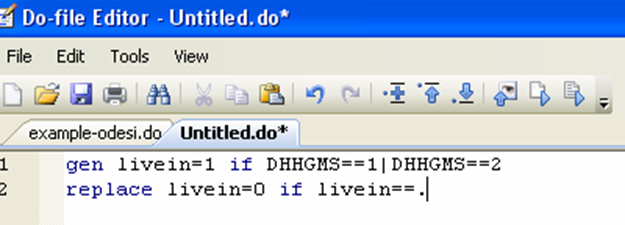

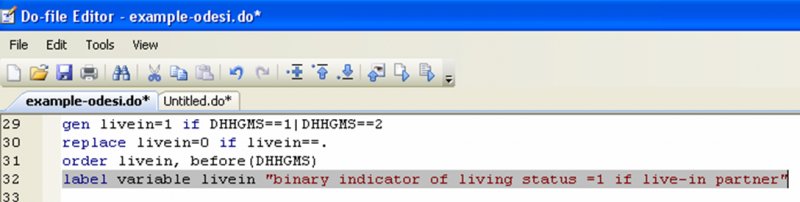

For example, say you want to create a variable that tells you whether the women in the dataset have a live-in partner. While there is no sure-fire way to establish that, we will approximate it by assuming that women who indicated their marital status as married or common-law actually live with their spouse or common-law partner:

The first line creates the variable "livein" and assigns it a value of 1 if the value of the marital status variable (dhhgms) is either 1 (married) or 2 (common-law). The second line replaces the missing value code by 0, making the "livein" variable binary.
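Based on that description, the two lines might look like the sketch below (a reconstruction, not necessarily the exact commands used in the workshop):

```stata
gen livein = 1 if dhhgms == 1 | dhhgms == 2    // married or common-law
replace livein = 0 if livein == .               // everyone else becomes 0
```

One consequence of the second line is that respondents whose marital status is itself missing are also coded 0.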

Now, let's say you would like to create a categorical variable that tells you, by age group, if a woman is below or above average in terms of body mass index (BMI).

The first line of the command creates a variable (meanbmi) which takes on a unique value for each age group, the average BMI for that age group. The prefix "bysort" is a combination of "by" and "sort"; you could equivalently break it into two commands:

sort DHHGAGE

by DHHGAGE: egen meanbmi=mean(HWTGBMI)

The "sort" part of the command organizes the observations according to the variable DHHGAGE, from smallest to largest, a step required before doing any action "by" the variable. It's usually easier to just use "bysort".

The second and third lines (starting with "gen") create a binary variable which equals 0 if an observation has a BMI lower than the average for her age group, and 1 if her BMI is above her age group average.
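Based on that description, the three lines might look like the following sketch. The variable name bmicat is taken from the next section, and the exact inequalities are an assumption.

```stata
bysort DHHGAGE: egen meanbmi = mean(HWTGBMI)    // age-group average BMI
gen bmicat = 0 if HWTGBMI < meanbmi              // below the group average
replace bmicat = 1 if HWTGBMI >= meanbmi         // at or above the group average
```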

Moving variables

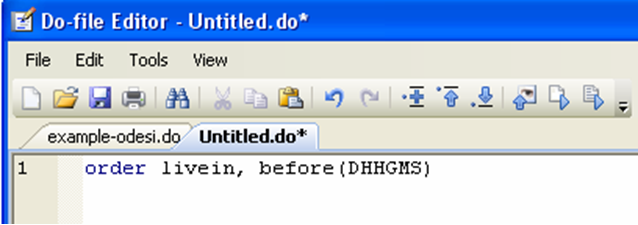

Now that you have created these new variables, it would be nice to make sure that the rules by which you generated them were correct. Ideally, you would like to look at livein (the new variable based on marital status) and dhhgms (the marital status variable). However, it's hard to compare two variables unless they are side by side. You can use the "order" command to move a variable (i.e. move a column of your dataset).

When you create a variable, by default it becomes the last column of your dataset. You can move it next to another variable instead:
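A minimal sketch, assuming a recent version of Stata in which "order" accepts the after() option:

```stata
order livein, after(dhhgms)    // place livein immediately after dhhgms
browse dhhgms livein           // compare the two columns side by side
```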

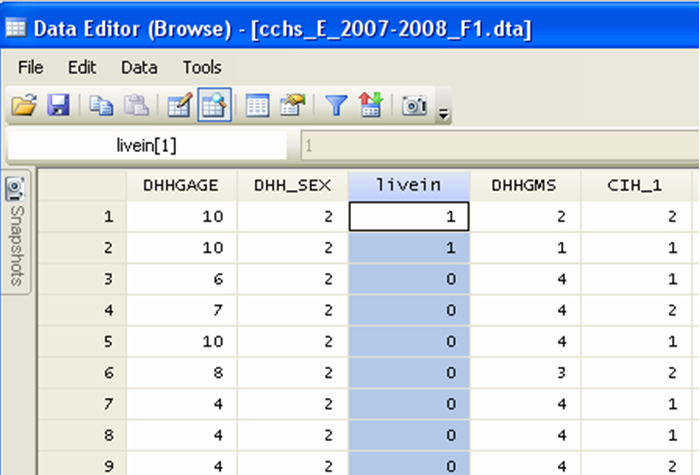

Now if we look at our dataset, we can compare the new variable to the old and make sure that we coded it properly:

Similarly, since our two new variables pertaining to BMI are now the last columns, let's move the original BMI variable to the end of the dataset:
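A minimal sketch using the "last" option of "order" (available in recent versions of Stata); match the casing of the variable names to your own file.

```stata
order HWTGBMI, last                   // move the original BMI variable to the end
browse HWTGBMI meanbmi bmicat         // view the three BMI columns together
```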

It is now easy to glance at our new variables:

Do you notice the problem on line 8? The variable bmicat should not be coded 1 if the original BMI variable is coded as a missing value. We can fix this with a quick replace:

replace bmicat=. if hwtgbmi==.d
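A slightly more general version of this fix, offered as a sketch, uses the missing() function so that every kind of missing value (".", ".a", ".d" and so on) is caught, not only ".d":

```stata
* Set bmicat to missing wherever the original BMI is missing in any form
replace bmicat = . if missing(hwtgbmi)
```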

Labelling variables

Whenever you create a new variable, it is a good idea to label it. Why? Having your variables labeled makes it easy for you or anyone else using your dataset to quickly see what each variable represents. You should think of your work as something that people should be able to reproduce. Labeling your variables is a small task that makes it much easier for others to use your data[15].

The syntax for labeling variables is as follows:

label variable varname "label"

In our previous example, the command would look like this:

Note that you can abbreviate this command to lab var:
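For the variables created earlier, the commands might look like this sketch; the label texts are suggestions only.

```stata
label variable livein "Lives with spouse or common-law partner (approximation)"
lab var meanbmi "Mean BMI within age group"
lab var bmicat "BMI at or above age-group mean (1) or below (0)"
```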

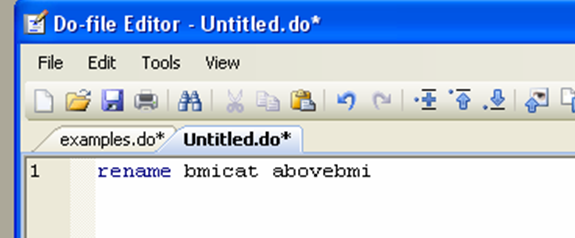

Renaming variables

You may find that you work faster if your variables have names that you recognize at first glance. In most cases this is by no means a necessary task in cleaning data, but if you use data from another country, for example, you may find that the variable names are in a foreign language, making them very hard to remember. The syntax is as easy as can be:

rename oldname newname
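For example, with hypothetical new names chosen for readability:

```stata
rename hwtghtm height_m     // height in meters
rename dhhgms marital       // marital status
```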

Let's see the final do-file

Your do-file may be slightly different from this but it should result in the same final dataset:

Let's try running it in one go to see if it works. Do not highlight any command and click on Execute (do). Note that whenever Stata encounters the command "browse" a data editor will pop up on your screen. Have a look at your data then close the data editor in order for Stata to continue running the do-file.

Let's also take the time to open our log to see what it looks like and how it could be useful.

Finally, let's look at our final dataset and make sure it contains all the correct variables, in the right format.

A few last words

This concludes our workshop but it's just the beginning for you. Learning to use statistical software involves a lot of trial and error, angry googling, and desperately trying to find someone who knows how to write a loop… Listed below are a few excellent resources to further your working knowledge of Stata:

UCLA: http://www.ats.ucla.edu.myaccess.library.utoronto.ca/stat/stata/default.htm

Princeton: http://data.princeton.edu.myaccess.library.utoronto.ca/stata/default.html

http://www.princeton.edu.myaccess.library.utoronto.ca/~otorres/Stata/statnotes

LSE: http://personal.lse.ac.uk.myaccess.library.utoronto.ca/lembcke/ecStata/2009/MResStataNotesJan2009PartA.pdf

http://personal.lse.ac.uk.myaccess.library.utoronto.ca/lembcke/ecStata/2009/MResStataNotesFeb2009PartB.pdf

University of North Carolina at Chapel Hill: http://www.cpc.unc.edu.myaccess.library.utoronto.ca/research/tools/data_analysis/statatutorial

Stata: http://www.stata.com/support/faqs/

[1] There is an assumption here that you already have a dataset. If you do not and you need help assembling data, please visit the data library (THIS COMMENT NEEDS TO REFERENCE THE GUIDE ON HOW TO DOWNLOAD A DATASET FROM SDA)

[2] You can use other text editors to create and manage do-files. For example, Smultron is an open-source software that works well with Stata.

[3] You can see the size of a data set by right-clicking on it, then selecting "properties".

[4] You should create a folder in an easy to remember location (desktop works well) for your Stata work. Then check its properties by right-clicking on it, and copy the location. That's your path.

[5] ", replace" is optional here but rather useful if you want to keep just one log per do-file. If you don't use the ", replace" option, you will need to change the name of the log every time you run the do-file.

[6] However, if a do-file is interrupted because of an error and a log is open, you will need to close it before running the same do-file again, because one of the first commands of the do-file is to start a log, which will result in an error message unless the previous log is closed. Just type the command "log close" in the command window, or highlight it and execute from your do-file.

[7] Note to users of this guide: this command would typically be located towards the end of the do-file. I have created a screenshot here with a new do-file just to show one command alone. All the examples in this guide that similarly use a new do-file with just one command were done that way to save space. The goal of this workshop is to learn to create a cleaning do-file, in which commands are listed one after the other. I trust that users can understand the commands well enough by the end of the workshop to assemble them in the order that is logical for the purpose of their own work.

[8] The examples in this guide were created using a customized subset of the Canadian Community Health Survey (CCHS), annual component, 2007-2008, available through the Data Liberation Initiative (DLI) and downloaded using SDA@CHASS.

[9] See the Stata help files on expressions and operators: type "help exp" and "help operator" in the command window.

[10] There is no rule of thumb at play here; I simply picked a list of variables that contained little useful information. Sometimes, the fact that only a small number of observations contain data IS informative, in and of itself. Do not drop variables that tell you something important.

[11] Note that you can also use this command to make groups. The CCHS dataset already has age by age group but if you had a variable for actual age, you could generate an age group variable using recode. See the Stata help file (help recode) for more options.

[12] Admittedly, these are indeed outliers, but not the type we want to do anything about. Leave those alone. "Dealing" with true events in any way is likely to do more harm than good as you would truncate your dataset, potentially creating bias in your analysis later.

[13] You replace "varlist" with the list of the variables you want tabulated, as in the drop example.

[14] All of these commands, qualifiers and prefixes have Stata help files. Have a look at them for a more in-depth presentation.

[15] Knowing how to label variables can also be useful if the data was not provided to you with a dictionary file; you can then use the questionnaire to build labels for all your variables of interest, just as a dictionary file would do.

Source: https://mdl.library.utoronto.ca/technology/tutorials/cleaning-data-stata

Posted by: baggettvirs1976.blogspot.com
